AI image generation is powerful. Like, actually powerful—not marketing-speak powerful. Type words, get pictures. The gap between thought and visual has never been smaller.

With that power comes... well, not exactly responsibility in the Spider-Man sense, but at least some awareness. Here's what we've learned from watching millions of generations happen on Artfelt.

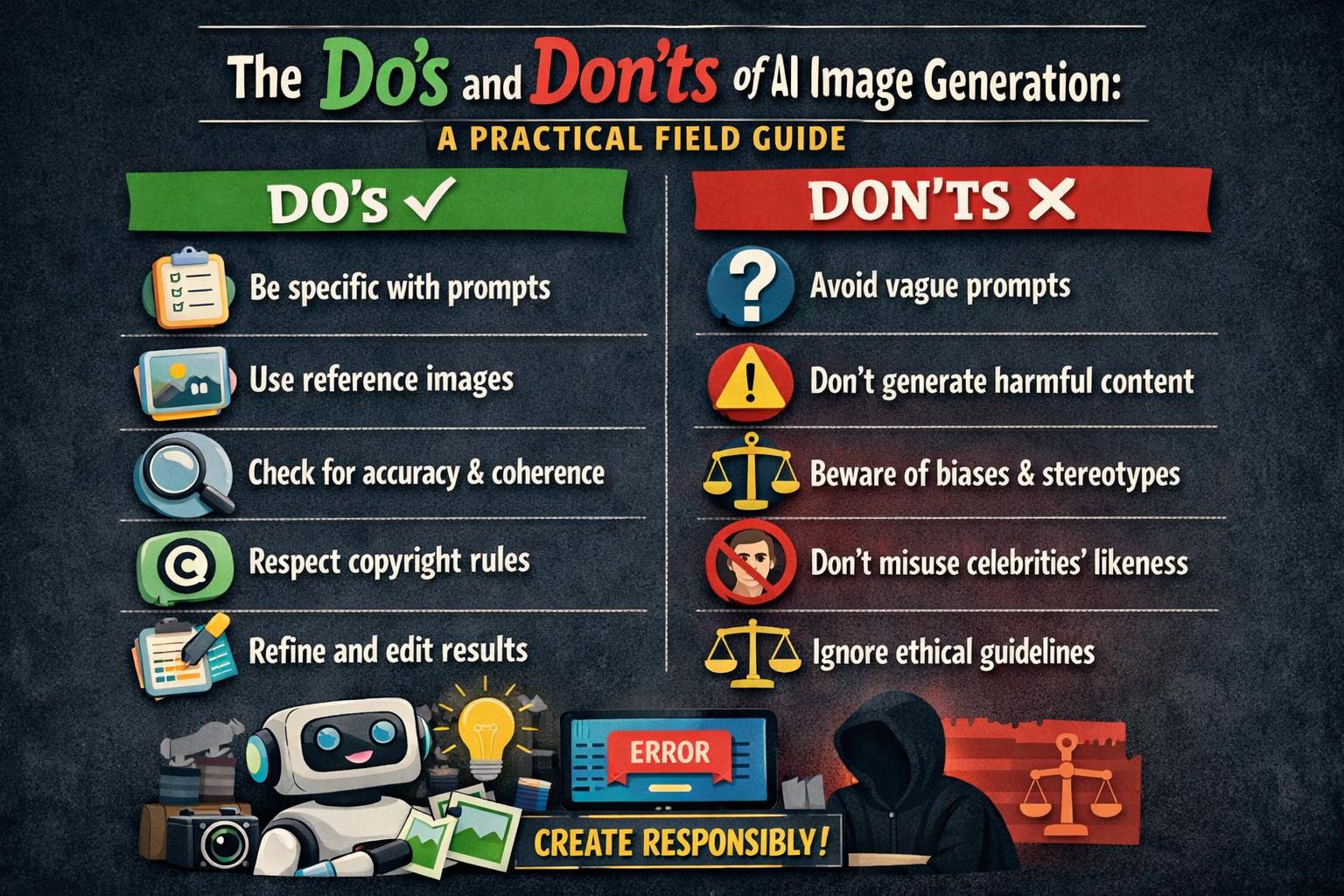

What Works

Do iterate relentlessly

Your first prompt probably won't be your best result. Or your second. Or your fifth. The creators getting the most striking results are often the ones generating 30 variations of the same concept, tweaking as they go.

Each generation teaches you something. The colors are right but the composition is wrong. Great—keep the colors, adjust the composition. This isn't failure; it's refinement.

Do learn when to stop

The flip side of iterating relentlessly is burnout. Sometimes you chase a result that the model just won't give you. After 50 generations, you might need to accept that either this particular model can't produce what you're imagining, or your prompt is fundamentally misaligned with how the model thinks.

Walk away. Come back later. Try a different approach. Sometimes the best move is to start fresh with a clearer vision.

Do credit the tool when it matters

If you're posting AI art on social media just for fun, no one's going to demand a citation. But if you're using it commercially, in portfolios, in contexts where the medium matters—say it's AI-generated.

This isn't about shame. It's about honesty. The technology is cool. There's no reason to hide it.

Do learn your platform's quirks

Every model has strengths and weaknesses. SDXL handles artistic styles beautifully but struggles with text. Midjourney has a specific aesthetic that some love and others find too stylized. DALL-E 3 tends to follow complex, multi-part instructions more closely than most.

You'll get better results once you understand what your platform does well.

Do use negative prompts

Platforms that support negative prompts let you steer away from unwanted elements. On Artfelt and similar SDXL-based platforms, these are remarkably effective.

Instead of just hoping the model doesn't add extra fingers, tell it not to: extra fingers, deformed hands, mutated. Instead of hoping text comes out right, exclude text entirely: text, watermark, signature.
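If you script your generations instead of typing into a UI, it helps to keep negative terms in reusable groups and merge them per request. The sketch below is a minimal Python illustration under stated assumptions: `GenerationRequest` and `build_negative_prompt` are hypothetical names made up for this example, not part of Artfelt's API or any library. The term groups are the ones listed above.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class GenerationRequest:
    """Hypothetical request shape; field names are illustrative, not a real platform API."""
    prompt: str
    negative_prompt: str
    seed: Optional[int] = None


# Reusable groups of terms to steer away from (taken from the examples above).
HANDS = ["extra fingers", "deformed hands", "mutated"]
TEXT = ["text", "watermark", "signature"]


def build_negative_prompt(*groups: List[str]) -> str:
    """Merge term groups into one comma-separated negative prompt.

    Terms are normalized (trimmed, lowercased) and duplicates are dropped,
    while the first-seen order is preserved.
    """
    seen: dict = {}
    for group in groups:
        for term in group:
            seen.setdefault(term.strip().lower(), None)
    return ", ".join(seen)


req = GenerationRequest(
    prompt="portrait of a violinist, soft window light",
    negative_prompt=build_negative_prompt(HANDS, TEXT),
)
print(req.negative_prompt)
# extra fingers, deformed hands, mutated, text, watermark, signature
```

As for why this works: SDXL-style pipelines typically feed the negative prompt into classifier-free guidance in place of the empty "unconditional" prompt, so each denoising step is pushed away from what you listed.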

Do save your seeds

When you get a result that's close to what you want, note the seed number. Reusing that seed with the same model and settings gives you the same starting point, so you can tweak the prompt and get variations on that specific image rather than starting from scratch each time. This is how you refine rather than take a random walk.
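Why does a seed help? Diffusion models like SDXL start every image from random noise and gradually denoise it toward your prompt; the seed fixes that starting noise. (In the Hugging Face diffusers library, for example, you do this by passing a seeded `torch.Generator` to the pipeline.) The toy Python sketch below stands in for that first step only: `initial_noise` and `generate` are hypothetical helpers, and no actual image model is involved.

```python
import random
from typing import List, Tuple


def initial_noise(seed: int, size: int = 8) -> List[float]:
    """Toy stand-in for a diffusion model's starting latent: Gaussian noise from a seeded RNG."""
    rng = random.Random(seed)  # a fresh RNG per call, so the same seed always gives the same noise
    return [rng.gauss(0.0, 1.0) for _ in range(size)]


def generate(prompt: str, seed: int) -> Tuple[str, List[float]]:
    """Hypothetical generation step: pairs the prompt with its seeded starting noise.

    A real model would then denoise this latent under guidance from the prompt.
    """
    return prompt, initial_noise(seed)


saved_seed = 1234  # the seed you noted from a "close" result

_, first = generate("lighthouse at dusk, oil painting", saved_seed)
_, tweak = generate("lighthouse at dusk, oil painting, warmer palette", saved_seed)
_, fresh = generate("lighthouse at dusk, oil painting", 5678)

print(first == tweak)  # True: same seed, same starting noise; only the prompt changed
print(first == fresh)  # False: a new seed means a new starting point
```

Keeping the seed fixed while you edit the prompt isolates the effect of your wording, which is what makes the refinement loop in "Do iterate relentlessly" productive.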

What to Avoid

Don't generate people without thinking about consent

This is the big one. Generating realistic images of real people—especially celebrities or private individuals—raises serious ethical questions. Even if it's technically legal in your jurisdiction, ask yourself: would this person want this image to exist?

The safe rule: avoid photorealistic depictions of identifiable individuals unless you have permission or a compelling editorial purpose.

Don't use AI to deceive

Generating fake news photos, fake evidence, fake screenshots—that's not creativity, that's manipulation. The technology makes it easy, which makes the restraint meaningful.

If you're creating satirical or artistic content that could be mistaken for real, label it. If you're not sure whether something crosses the line, it probably does.

Don't assume copyright safety

The training data for many models includes copyrighted images. Models can produce outputs that closely resemble existing works: occasionally specific artworks, more often trademarked characters.

If you're using AI art commercially, avoid prompts that simply imitate a specific artist's style without transforming it into something of your own. If a generation looks suspiciously like an existing work, don't use it. The legal landscape is still developing, and "I didn't know" isn't much of a defense.

Don't expect perfection

AI isn't a magic button. It's a tool that requires skill to use well. The images you see on social media are often the best results from dozens or hundreds of generations.

If you're frustrated that your results don't look like what you imagined, two things: (1) your imagination might not match what the model is capable of, and (2) you're probably not seeing other people's failed attempts.

Don't ignore bias

Models learn from the internet. The internet has biases. The models reflect those biases.

Default outputs often over-sexualize women. Certain ethnicities are stereotyped or underrepresented. Marginalized communities may be represented poorly or not at all.

You can counteract some of this through deliberate prompting. But the baseline bias exists. Be aware of it, and consider whether you're reinforcing or challenging it.

Platform-Specific Notes

Different platforms have different policies. On Artfelt, we:

- Filter prompts that request illegal or harmful content

- Aim for transparency about what gets blocked and why

- Don't sell your prompt data or train on your outputs without consent

- Allow anonymous creation because privacy enables experimentation

Know the policies of whatever platform you use. Some claim ownership of your outputs. Some train on everything you generate. Some have aggressive content filters that block legitimate creative work.

The Bottom Line

AI image generation is a tool. It can be used thoughtfully or carelessly, creatively or exploitatively. The technology doesn't make that choice—you do.

Most people use these tools for expression, exploration, and joy. Make art. Make memes. Make weird stuff that makes you laugh. Try things out. Learn what works.

Just... don't be a jerk about it. The technology will keep evolving. The norms around it will keep developing. Your choices contribute to what this medium becomes.