AI image generation is genuinely powerful. Type some words, get a picture. The simplicity belies the complexity of what's happening—and the complexity of the ethical questions it raises.

The technology isn't going away. The question isn't whether to use it, but how to use it well. Here's a practical framework for responsible AI art creation.

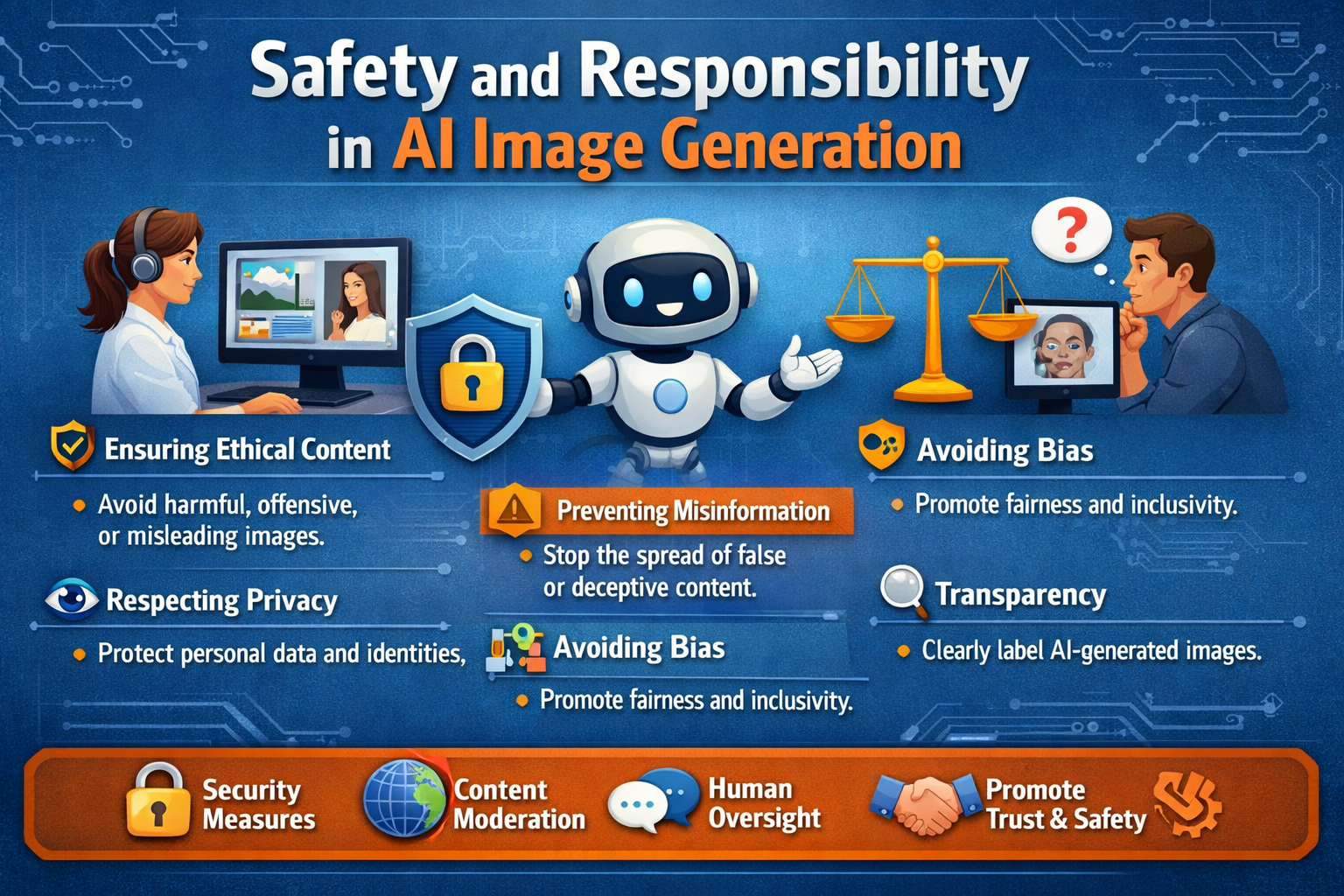

The Real Risks

Misinformation

Generated images can be realistic enough to fool people. A fake photo of a politician, a manufactured event, "evidence" of something that never happened. We've already seen AI images go viral as real news before being debunked.

The fix isn't technological—it's behavioral. Don't generate images designed to mislead. If you're creating satirical or artistic content that could be mistaken for real, label it. When in doubt, ask: could someone be harmed by believing this is real?

Consent and Likeness

Generating images of real people without their consent was once a gray area, and it has rapidly become a serious problem. Celebrities and public figures have somewhat reduced privacy expectations, but that doesn't mean anything goes. Non-consensual intimate imagery is now illegal in many jurisdictions, and mainstream platforms ban it.

The safe rule: avoid generating realistic images of identifiable individuals unless you have clear permission or a strong editorial purpose. Even then, transparency is key.

Copyright and Style

AI training data includes copyrighted images. The resulting models can sometimes produce outputs that closely resemble existing works—from specific artworks to trademarked characters. Is that illegal? Courts are still deciding.

Practical guidance: don't deliberately generate copies of copyrighted works. If you're using AI for commercial purposes, avoid prompts that target specific artists' styles without transformation. And if a generation looks suspiciously like an existing work, don't use it.

Bias and Harm

Models learn from internet-scale data, which means they learn the biases present in that data. In generated images, women are often over-sexualized, certain ethnicities are stereotyped, and marginalized communities are underrepresented or misrepresented.

You can counteract this through intentional prompting, but the underlying bias is systemic. It's worth being aware of what the model "wants" to produce and questioning whether you should let it.

Platform Responsibilities

Users aren't the only ones with obligations. Platforms that offer AI generation have their own responsibilities:

Content Filtering

Most platforms employ some form of content filtering: blocking prompts that request illegal or harmful content, and screening outputs that violate policies. At Artfelt, we use a combination of prompt filtering and output screening to prevent the most harmful categories while preserving creative freedom.

The balance is delicate. Overfilter and you frustrate legitimate users (artists trying to create edgy but legal content, researchers studying model behavior, etc.). Underfilter and you expose yourself—and potentially others—to harm.
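The two stages described above (prompt filtering, then output screening) can be sketched in a few lines of Python. This is a minimal illustration, not Artfelt's actual implementation: the category names, the trigger phrases, and the `generate` and `screen_output` callables are all hypothetical, and simple phrase matching stands in for whatever classifier a real platform would use.

```python
from dataclasses import dataclass
from typing import Any, Callable, Optional

# Hypothetical categories and trigger phrases, for illustration only.
# A production system would use trained classifiers, not substring matching.
BLOCKED_PHRASES = {
    "non_consensual_imagery": ["undressed photo of"],
    "deceptive_realism": ["fake news photo", "leaked photo of"],
}


@dataclass
class Decision:
    allowed: bool
    stage: str                      # "prompt", "output", or "passed"
    category: Optional[str] = None  # why it was blocked, if it was


def check_prompt(prompt: str) -> Optional[str]:
    """Return the first blocked category the prompt matches, or None."""
    text = prompt.lower()
    for category, phrases in BLOCKED_PHRASES.items():
        if any(phrase in text for phrase in phrases):
            return category
    return None


def moderate(
    prompt: str,
    generate: Callable[[str], Any],
    screen_output: Callable[[Any], Optional[str]],
) -> Decision:
    """Run prompt filtering first, then screen the generated output."""
    category = check_prompt(prompt)
    if category is not None:
        # Blocked before generation: the model is never called.
        return Decision(False, "prompt", category)

    image = generate(prompt)
    category = screen_output(image)  # assumed to return a category or None
    if category is not None:
        return Decision(False, "output", category)

    return Decision(True, "passed")
```

Returning the stage and category with every decision, rather than a bare yes or no, is a deliberate choice in this sketch: it gives the user something concrete to understand or appeal. Tightening or loosening the phrase list is where the overfilter/underfilter trade-off shows up in practice.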

Transparency About Limitations

Platforms should be clear about what their models can and can't do, what safeguards are in place, and what happens to user data. If a platform claims "unlimited creative freedom" while quietly implementing aggressive filtering, users deserve to know.

Appeals and Reporting

When content is flagged or removed, users should have recourse. When harmful content slips through, users should be able to report it easily. The moderation loop should be responsive.

Practical Guidelines for Creators

Based on everything above, here's a practical checklist:

Label your work. If an image is AI-generated and appears in any context where the medium matters, say so.

Respect consent. Think twice before generating realistic images of real people, especially in contexts that could affect their reputation.

Question your intent. Are you creating to express, explore, or communicate? Or are you creating to deceive, harm, or exploit? Intent matters.

Check your outputs. Scan each generation for unintended resemblances, biases, or problematic content before you use or share it.

Consider context. The same image might be fine in an art gallery but inappropriate in a news context. Context shapes impact.

Stay informed. Laws and norms around AI generation are evolving rapidly. What's acceptable today may not be tomorrow, and vice versa.

The Platform Perspective

At Artfelt, we approach safety through a few principles:

- Default to openness, intervene on harm. We don't want to be the creativity police. We do want to prevent genuine harm.

- Transparent guardrails. We'll tell you what we filter and why. If a filter blocks your prompt, you should understand what category it fell into.

- Responsive moderation. When users report issues, we investigate quickly.

- No surveillance. We don't sell your prompt data, we don't train on your outputs without consent, and we collect minimal identifying information.

The goal isn't a sanitized environment—it's an environment where creativity flourishes alongside responsibility.

Finding the Balance

AI art isn't going away. The technology will get more powerful, more accessible, and more ubiquitous. The question is whether we develop healthy norms around its use—or whether we let the worst instincts define the medium.

Every tool can be misused. Photography can be used for surveillance or for art. Photoshop can be used for deception or for creativity. AI image generation is the same. The technology itself is neutral; the use is what matters.

We're optimistic. We see far more people using AI art for expression, exploration, and joy than for manipulation and harm. The responsible majority sets the norm. Our job—as users, as platforms, as a community—is to keep tilting the balance in that direction.